https://www.google.co.in/search?hl=en&newwindow=1&client=firefox-a&rls=org.mozilla%3Aen-US%3Aofficial&biw=1025&bih=467&q=synthetic+telepathy+research&oq=synthetic+telepathy+&gs_l=serp.3.2.0l2j0i20j0l7.17519.18896.0.26355.8.8.0.0.0.0.373.797.5j2j0j1.8.0...0.0...1c.1.7.serp.Q1fjUn4loZ4

http://io9.com/5038464/army-sinks-millions-into-synthetic-telepathy-research

http://phys.org/news137863959.html

http://www.mindpowerworld.com/synthetic-telepathy-mind-reading-technology

http://en.wikipedia.org/wiki/Brain%E2%80%93computer_interface

http://cnslab.ss.uci.edu/muri/research.html

http://deepthought.newsvine.com/_news/2010/05/21/4322682-synthetic-telepathy-the-hidden-truth

http://drowninginabsurdity.wordpress.com/2012/11/24/artificialsynthetic-telepathy-and-mind-control-2/

http://www.indymedia.org.uk/en/2010/05/451768.html

http://en.wikipedia.org/wiki/List_of_institutions_granting_degrees_in_cognitive_science#India

http://en.wikipedia.org/wiki/Humboldt-University_of_Berlin

http://en.wikipedia.org/wiki/Max_Planck_Institute_for_Human_Cognitive_and_Brain_Sciences

http://www.bio.uni-freiburg.de/

http://www.bbci.de/contact

http://www.uni-heidelberg.de/studium/interesse/faecher/biomed-eng.html

http://www.manyagroup.com/top-universities-in-germany

http://www.ehow.com/list_6510286_top-engineering-universities-germany.html

http://www.ehow.com/list_6603559_list-technical-universities-germany.html

Artificial/Synthetic Telepathy And Mind Control

By Lily Morgan, March 31, 2012

Special thanks to Lily Morgan for letting me re-post this here. Technologies that violate the sacred space of an individual’s mind are more prolific than people realize. Becoming familiar with these technologies is the first step to recognizing their pattern of influence—not just as they may apply to yourself or friends and family, but also in the wider net of Perception Management throughout the alternative and new-age community as well. Uses and abuses of this technology are no longer reserved for unaware military and/or black op(s) employees, or MILAB abductees. This form of victimization is heinous, cruel, and wrong at every level of human decency. The original article can be found at the following link: http://emvsinfo.blogspot.de/2012/03/artificialsynthetic-telepathy-and-mind.html

The full article is below. –Crystal Clark, November 24th, 2012

In order to understand my personal observations of my own experience with Mind Control and Synthetic Telepathy, it is vital that the reader become familiar with some background information and technical details on the development of Mind Control and Electromagnetic Weapons. I ask this because these technologies can be, and are being, used against TIs (Targeted Individuals) and whole populations on a global scale; if not currently, then plans for the future are being prepared, tested and perfected. As such, the first part of this article is devoted to providing quotes from other people’s research and their personal experiences. Had I not already spent some time making myself aware of these subjects when I became a TI myself, I would not have had a knowledge base from which to eventually build a picture of what I was consciously aware of at the time, as it was happening to me. The consequences of the application of these technologies in the wrong hands are horrifying and inhumane.

Artificial

Made or produced by human beings rather than occurring naturally, typically as a copy of something natural.

Telepathy

“Telepathy” is derived from the Greek terms tele (“distant”) and pathe (“occurrence” or “feeling”). The term was coined in 1882 by the English psychical researcher Frederic W. H. Myers, a founder of the Society for Psychical Research (SPR).

Mind Control

Mind control (also known as brainwashing, coercive persuasion, mind abuse, thought control, or thought reform) refers to a process in which a group or individual “systematically uses unethically manipulative methods to persuade others to conform to the wishes of the manipulator(s), often to the detriment of the person being manipulated.”

Mind Rape

When mind reading and mind control are used against a person, it is sometimes referred to as mind rape. The reason is that, in general, mind reading is not used to observe but instead to control a person in illegal ways or to inflict maximum damage (including death) on a person. Mind rape occurs whenever one’s brain feels as though it has been viciously assaulted by some event or thing in reality, and when someone can manipulate another person’s thoughts and therefore their actions.

Love Bombing

“Mind-control techniques such as love-bombing are designed to bypass a person’s intelligence and especially his critical-thinking skills. When a person suddenly receives an overwhelming amount of love and acceptance, it is extremely difficult for them to stand back and assess the reasons for this or question something they desperately don’t want to have disappear.” Love Bombing is a technique widely used to initially entice, and then to later control and manipulate.

MIND CONTROL WEAPON

The term “Mind control” basically means covert attempts to influence the thoughts and behavior of human beings against their will (or without their knowledge), particularly when surveillance of an individual is used as an integral part of such influencing and the term “Psychotronic Torture” comes from psycho (of psychological) and electronic. This is actually a very sophisticated form of remote technological torture that slowly invalidates and incapacitates a person. These invisible and non-traceable technological assaults on human beings are done in order to destroy someone psychologically and physiologically. Actually, as per scientific resources, the human body, much like a computer, contains myriad data processors. They include, but are not limited to, the chemical-electrical activity of the brain, heart, and peripheral nervous system, the signals sent from the cortex region of the brain to other parts of our body, the tiny hair cells in the inner ear that process auditory signals, and the light-sensitive retina and cornea of the eye that process visual activity. We are on the threshold of an era in which these data processors of the human body may be manipulated or debilitated. http://www.cyberbrain.se/?page_id=112

Mind Control and Electromagnetic Weapons

Many thanks for the in-depth and invaluable information kindly quoted from these sites: http://www.stopeg.com/mindrape.html http://www.nwbotanicals.org/oak/newphysics/synthtele/synthtele.html I strongly recommend further reading, as I have only been able to briefly touch upon some of the information available on the net with regard to Mind Control.

General introduction to mind reading, mind control, mind rape

Mind control is a controversial subject, most importantly because more disinformation has been released about mind control than about any other subject. Mind control is very real, however: it was performed by our national secret services yesterday and is performed by our national secret services today!

Mind control is about controlling the mind and can take many forms. You might be controlled by subjective propaganda in your favorite newspaper or on your favorite television channel. But you may also be brainwashed by certain drugs, or by voices beamed into your head because some people do not like you. A lot has been written on this subject. I quote parts of some books, reports and documents below; for more information, just search the internet.

From THE RAPE OF THE MIND by Joost A. M. Meerloo:

The rape of the mind and stealthy mental coercion are among the oldest crimes of mankind. They probably began back in prehistoric days when man first discovered that he could exploit human qualities of empathy and understanding in order to exert power over his fellow men. The word “rape” is derived from the Latin word _rapere_, to snatch, but also is related to the words to rave and raven. It means to overwhelm and to enrapture, to invade, to usurp, to pillage and to steal. The modern words “brainwashing,” “thought control,” and “menticide” serve to provide a clearer conception of the actual methods by which man’s integrity can be violated. When a concept is given its right name, it can be more easily recognized and it is with this recognition that the opportunity for systematic correction begins.

From Terms Other Than Mind Control:

One problem with the term “mind control” is the “kook” association. This association/stereotype is reinforced in some of the popular culture — as well as by certain victims (or provocateurs) who sound “crazy.” [There are cointelpro-style provocateurs who want to keep the real victims discredited, if possible, because they work as a damage control unit for the victimizers.] Many other people encountering the term “mind control” are just citizens who are purposely kept ignorant of the known and documented history of mind control — as well as the state of the technology right now.

From Hearing Voices from the blog Artificial Telepathy

The problem is that artificial telepathy provides the perfect weapon for mental torture and information theft. It provides an extremely powerful means for exploiting, harassing, controlling, and raping the mind of any person on earth. It opens the window to quasi-demonic possession of another person’s soul.

When used as a “nonlethal” weapons system it becomes an ideal means for neutralizing or discrediting a political opponent. Peace protesters, inconvenient journalists and the leaders of vocal opposition groups can be stunned into silence with this weapon. Artificial telepathy also offers an ideal means for complete invasion of privacy. If all thoughts can be read, then Passwords, PIN numbers, and personal secrets simply cannot be protected. One cannot be alone in the bathroom or shower. Embarrassing private moments cannot be hidden: they are subject to all manner of hurtful comments and remarks. Evidence can be collected for blackmail with tremendous ease: all the wrongs or moral lapses of one’s past are up for review.

From Operation Mind Control (excerpts) by Walter H. Bowart

The CIA succeeded in developing a whole range of psycho-weapons to expand its already ominous psychological warfare arsenal. With these capabilities, it was now possible to wage a new kind of war—a war which would take place invisibly, upon the battlefield of the human mind. … [p. 19]

Mind control is the most terrible imaginable crime because it is committed not against the body, but against the mind and the soul. Dr. Joost A. M. Meerloo expresses the attitude of the majority of psychologists in calling it ‘mind rape,’ and warns that it poses a great ‘danger of destruction of the spirit’ which can be ‘compared to the threat of total physical destruction . . .’ … [p. 23]

This technology has been around for many years in the U.S. A lot of people have been used as guinea pigs for testing. It is a satellite-delivered EEG. Many people have lost everything as a result: family, employment, etc. The first human EEG was recorded in 1924.

BRAIN WAVE MONITORS / ANALYZERS (MIND THOUGHT DECIPHERING)

Lawrence Pinneo, a neurophysiologist and electronic engineer working for Stanford Research Institute (a military contractor) is the first “known” pioneer in this field. In 1974, he developed a computer system which correlated brain waves on an electroencephalograph with specific commands.

In the early 1990s, Dr. Edward Taub reported that words could be communicated onto a screen using the thought-activated movements of a computer cursor.

How is it done?

The magnetic field around the head, that is, the brain waves of an individual, can be monitored by satellite. The transmitter is therefore the brain itself, just as body heat is used for “Iris” satellite tracking (infrared), or as mobile phones or bugs can be tracked as “transmitters.” In the case of brain wave monitoring, the results are then fed back to the relevant computers. Monitors then use the information to conduct a “conversation” in which audible Neurophone input is “applied” to the target / victim.

OTHER KNOWN TECHNOLOGIES CURRENTLY UNDER SECRECY ORDERS

BRAINWAVE SCANNERS / PROGRAMS: The first program was developed in 1994 by Dr. Donald York and Dr. Thomas Jensen. In that year, the brain wave patterns of 40 subjects were officially correlated with both spoken words and silent thought. This was achieved by a neurophysiologist, Dr. Donald York, and a speech pathologist, Dr. Thomas Jensen, from the University of Missouri. They clearly identified 27 words / syllables in specific brain wave patterns and produced a computer program with a brain wave vocabulary.

Using lasers / satellites and high-powered computers, the agencies have now gained the ability to decipher human thoughts from a considerable distance (instantaneously).

DESCRIPTION: A personal scanning and tracking system involving the monitoring of an individual’s EMF via remote means, e.g. satellite. The results are fed to thought-activated computers that possess a complete brainwave vocabulary.

PURPOSE: Practically, communication with stroke victims and brain-activated control of modern jets are two applications. However, more often, it is used to mentally rape a civilian target, their thoughts being referenced immediately and/or recorded for future use.

Pulsed Microwave Technology

Pulsed microwave voice-to-skull (or other-sound-to-skull) transmission was discovered during World War II by radar technicians who found they could hear the buzz of the train of pulses being transmitted by radar equipment they were working on. This phenomenon has been studied extensively by Dr. Allan Frey (Willow Grove, 1965), whose work has been published in a number of reference books.

What Dr. Frey found was that single pulses of microwave could be heard by some people as “pops” or “clicks”, while a train of uniform pulses could be heard as a buzz, without benefit of any type of receiver.

Dr. Frey also found that a wide range of frequencies, as low as 125 MHz (well below microwave) worked for some combination of pulse power and pulse width. Detailed unclassified studies mapped out those frequencies and pulse characteristics which are optimum for generation of “microwave hearing”.

Very significantly, when discussing electronic mind control, is the fact that the peak pulse power required is modest – something like 0.3 watts per square centimeter of skull surface, and that this power level is only applied or needed for a very small percentage of each pulse’s cycle time. 0.3-watts/sq cm is about what you get under a 250-watt heat lamp at a distance of one meter. It is not a lot of power.

When you take into account that the pulse train is off (no signal) for most of each cycle, the average power is so low as to be nearly undetectable. This is the concept of “spike” waves used in radar and other military forms of communication.
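The relationship between peak and average power described above can be put into numbers. The following is only a minimal sketch: the pulse width and repetition rate are hypothetical values picked for illustration, not figures taken from Frey's work or from this article, and the function names are made up for the example. The second function assumes an idealized source that radiates equally in all directions, which gives a rough way to turn a lamp's wattage and distance into watts per square centimeter; a real heat lamp with a reflector is directional, so that figure is an order-of-magnitude estimate at best.

```python
import math


def average_power_density(peak_w_per_cm2, pulse_width_s, pulses_per_second):
    """Average power density of a pulse train: peak value times duty cycle."""
    duty_cycle = pulse_width_s * pulses_per_second
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("pulse width and rate give a duty cycle outside 0..1")
    return peak_w_per_cm2 * duty_cycle


def isotropic_power_density(source_watts, distance_m):
    """Power density (W/cm^2) at a distance from an idealized isotropic source."""
    distance_cm = distance_m * 100.0
    sphere_area_cm2 = 4.0 * math.pi * distance_cm ** 2
    return source_watts / sphere_area_cm2


# Hypothetical pulse train: 0.3 W/cm^2 peak, 10-microsecond pulses, 100 per second.
avg = average_power_density(0.3, 10e-6, 100)
print(f"average power density: {avg:.6f} W/cm^2")

# Idealized 250 W source at one meter (all power radiated, no reflector).
lamp = isotropic_power_density(250.0, 1.0)
print(f"isotropic 250 W source at 1 m: {lamp:.5f} W/cm^2")
```

With these assumed pulse values the duty cycle is 0.1%, so the average is one thousandth of the peak. The isotropic estimate for 250 watts at one meter comes out near 0.002 W/cm², so the heat-lamp comparison with 0.3 W/cm² would only hold for a lamp that concentrates its output very strongly.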

Frequencies that act as voice-to-skull carriers are not single frequencies, as, for example TV or cell phone channels. Each sensitive frequency is actually a range or “band” of frequencies. A technology used to reduce both interference and detection is called “spread spectrum”. Spread spectrum signals usually have the carrier frequency “hop” around within a specified band. Unless a receiver “knows” this hop schedule in advance, like other forms of encryption there is virtually no chance of receiving or detecting a coherent readable signal. Spectrum analyzers, used for detection, are receivers with a screen. A spread spectrum signal received on a spectrum analyzer appears as just more “static” or noise.
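The idea of a shared "hop schedule" can be illustrated with a toy example. This is a sketch under stated assumptions, not a description of any real radio system: the channel list and seed values are hypothetical, and Python's general-purpose `random.Random` generator stands in for whatever sequence generator an actual spread spectrum system would use.

```python
import random


def hop_schedule(seed, channels, hops):
    """Return a pseudo-random sequence of channel frequencies for a given seed."""
    rng = random.Random(seed)  # same seed -> same sequence on both ends
    return [rng.choice(channels) for _ in range(hops)]


# Hypothetical band: ten 1 MHz channels starting at 900 MHz.
CHANNELS_MHZ = [900 + i for i in range(10)]

transmitter = hop_schedule(seed=1234, channels=CHANNELS_MHZ, hops=8)
receiver = hop_schedule(seed=1234, channels=CHANNELS_MHZ, hops=8)
eavesdropper = hop_schedule(seed=9999, channels=CHANNELS_MHZ, hops=8)

# Count how often a listener with the wrong seed lands on the right channel.
matches = sum(t == e for t, e in zip(transmitter, eavesdropper))
print("receiver follows transmitter:", receiver == transmitter)
print("eavesdropper on the right channel for", matches, "of", len(transmitter), "hops")
```

A receiver that shares the seed reproduces the schedule exactly, while one that does not is on the right channel only by chance.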

The first successful unclassified voice-to-skull experiment was carried out in 1974 by Dr. Joseph C. Sharp and Mark Grove, then at the Walter Reed Army Institute of Research. A Frey-type audible pulse was transmitted every time the voice waveform passed down through the zero axis, a technique easily duplicated by ham radio operators who build their own equipment. The sensation is reported as a buzzing, clicking, or hissing which seems to originate within or just behind the head. The phenomenon occurs with carrier densities as low as microwatts per square centimeter with carrier frequencies from 0.3-3.0 GHz. By proper choice of pulse characteristics, intelligible speech may be created.
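The timing rule described here, one pulse each time the waveform crosses zero on its way down, is ordinary zero-crossing detection. The sketch below shows only that signal-processing step, on a hypothetical 5 Hz test tone sampled at 1000 Hz; the function name and the test signal are assumptions for illustration and are not taken from the Sharp and Grove experiment.

```python
import math


def downward_zero_crossings(samples):
    """Indices where the signal goes from >= 0 to < 0 (negative-going crossings)."""
    return [
        i
        for i in range(1, len(samples))
        if samples[i - 1] >= 0.0 and samples[i] < 0.0
    ]


# Hypothetical test signal: a 5 Hz sine sampled at 1000 Hz for one second.
SAMPLE_RATE = 1000
signal = [math.sin(2 * math.pi * 5 * n / SAMPLE_RATE) for n in range(SAMPLE_RATE)]

crossings = downward_zero_crossings(signal)
pulse_times = [i / SAMPLE_RATE for i in crossings]
print(len(crossings), "pulse times (s):", pulse_times)
```

A 5 Hz tone has five negative-going crossings per second, so the list contains five pulse times, roughly 0.2 seconds apart.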

Dr. James Lin of Wayne State University has written a book entitled: Microwave Auditory Effects and Applications. It explores the possible mechanisms for the phenomenon, and discusses possibilities for the deaf, as persons with certain types of hearing loss can still hear pulsed microwaves (as tones or clicks and buzzes, if words aren’t modulated on). Lin mentions the Sharp and Grove experiment and comments: “The capability of communicating directly with humans by pulsed microwaves is obviously not limited to the field of therapeutic medicine.”

“Synthetic Telepathy”

In 1975, researcher A. W. Guy stated that “one of the most widely observed and accepted biologic effects of low average power electromagnetic energy is the auditory sensation evoked in man when exposed to pulsed microwaves.”

He concluded that at frequencies where the auditory effect can be easily detected, microwaves penetrate deep into the tissues of the head, causing rapid thermal expansion (at the microscopic level only) that produces strains in the brain tissue.

An acoustic stress wave is then conducted through the skull to the cochlea, and from there, it proceeds in the same manner as in conventional hearing. It is obvious that receiver-less radio has not been adequately publicized or explained because of national security concerns.

Today, the ability to remotely transmit microwave voices inside a target’s head is known inside the Pentagon as “Synthetic Telepathy”. According to Dr. Robert Becker, “Synthetic Telepathy has applications in covert operations designed to drive a target crazy with voices or deliver undetected instructions to a programmed assassin.”

This technology may have contributed to the deaths of 25 defense scientists variously employed by Marconi Underwater and Defense Systems, Easams and GEC. Most of the scientists worked on highly sensitive electronic warfare programs for NATO, including the Strategic Defense Initiative. It is claimed that directed energy weapons might have been used to literally drive these men to suicide and ‘accidents’.

Biological Amplification Using Microwave Band Frequencies

The next major development in ELF weaponry was the concept of a biological amplification of these signals at the cell level to perpetuate and set up resonance for more sophisticated information transfer. This was the beginning of using more than one technology in a stack to do something “more.” While this was implied, it was never developed in “The Holographic Concept of Reality.”

Electromagnetic fields of relatively weak power levels can affect intercellular communication. Bio-amplification is apparently why radio signals of very low average power (mW) can produce audio effects and are difficult to detect. [Electromagnetic Interaction with Biological Systems, ed. Dr. James C. Lin, Univ. of Illinois, 1989, Plenum Press, NY]

Imposed weak low frequency fields (and radio frequency fields) that are many orders of magnitude weaker in the pericellular fluid (fluid between adjacent cells) than the membrane potential gradient (voltage across the membrane) can modulate the action of hormones, antibodies, neurotransmitters and cancer-promoting molecules at their cell surface receptor sites.

These ELF sensitivities appear to involve nonequilibrium and highly cooperative processes that mediate a major amplification of initial weak triggers associated with the binding of these molecules (specific cell surface receptor sites). Membrane amplification is inherent in this trans-membrane signaling sequence.

Initial stimuli associated with weak perpendicular EM fields and with binding of stimulating molecules at their membrane receptor sites elicit a highly cooperative modification of Ca++ binding to glycoproteins along the membrane.

A longitudinal spread is consistent with the direction of extracellular current flow associated with physiological activity and imposed EM fields. This cooperative modification of surface Ca++ binding is an amplifying stage. By imposing RF fields, there is a far greater increase in Ca++ efflux than is accounted for in the events of receptor-ligand binding.

Enzymes are protein molecules that function as catalysts, initiating and enhancing chemical reactions that would not otherwise occur at tissue temperatures. This ability resides in the pattern of electrical charges on the molecular surface.

Activation of these enzymes and the reactions in which they participate involve energies millions of times greater than in the cell surface, triggering events initiated by the EM fields, emphasizing the membrane amplification inherent in this trans-membrane signaling sequence.

Frey and Messenger confirmed that a microwave pulse with a slow rise time was ineffectual in producing an auditory response. Only if the rise time is short, resulting in effect in a square wave with respect to the leading edge of the envelope of radiated radio-frequency energy, does the auditory response occur. This is why we don’t “hear” ordinary radio and TV signals.

The significance of “Embryonic Holography” now becomes more understandable. For example, the specific frequency bands (0.3-3.0 Hz) are so flat as to appear almost 2-dimensional to most biological processes on a semi-quantum mechanical level. This means that these frequencies can be seen as “scalar” in their possible interaction with specific brain processes.

What these frequencies really are, however, are actual holograms of specific thoughts. They have a third component of detail (much like the patented P300 wave). This means that a hybrid form of brain fingerprinting is now possible. And, once these “images” are stored (usually in a very sophisticated super-cooled computer), similar responses can be fed back to the person, inducing virtually any state desired (via entrainment protocols).

The next two paragraphs are of particular importance, and I ask the reader to take extra time to allow the consequences of these technologies being used against TIs and whole populations to sink in. The advent of satellites, mobile phone masts, HAARP and wireless technology has enabled an invisible delivery system with which to affect the minds and emotions of every person on this planet.

Silent Sound Technology – “S-quad”

Silent (converted-to-voice FM) hypnosis can be transmitted using a voice frequency modulator to generate the “voice.” It is a steady tone, near the high end of hearing range (15,000 Hz), plus a hypnotist’s voice, varying from 300 – 4,000 Hz. These two signals are frequency modulated. The output now appears as a steady tone, like tinnitus, but with hypnosis embedded. The FM-voice controls the timing of the transmitter’s pulse.
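Frequency modulation itself is a standard technique and can be sketched in a few lines. The example below is a generic textbook-style illustration, not the circuit or method from any cited source: the 15,000 Hz carrier comes from the text, while the sample rate, the 1,000 Hz stand-in tone (chosen from within the 300 – 4,000 Hz voice range) and the frequency deviation are hypothetical values assumed for the example.

```python
import math


def frequency_modulate(message, carrier_hz, deviation_hz, sample_rate):
    """Frequency-modulate a carrier with a message whose samples lie in [-1, 1]."""
    phase = 0.0
    output = []
    for m in message:
        # Instantaneous frequency shifts around the carrier in step with the message.
        instantaneous_hz = carrier_hz + deviation_hz * m
        phase += 2.0 * math.pi * instantaneous_hz / sample_rate
        output.append(math.cos(phase))
    return output


SAMPLE_RATE = 96_000      # assumed; comfortably above twice the carrier
CARRIER_HZ = 15_000       # steady tone from the text
MESSAGE_HZ = 1_000        # stand-in tone inside the 300-4,000 Hz voice range
DEVIATION_HZ = 2_000      # hypothetical peak frequency deviation

message = [
    math.sin(2.0 * math.pi * MESSAGE_HZ * n / SAMPLE_RATE)
    for n in range(SAMPLE_RATE // 100)  # 10 ms of signal
]
modulated = frequency_modulate(message, CARRIER_HZ, DEVIATION_HZ, SAMPLE_RATE)
print(len(modulated), "samples, peak amplitude", max(abs(s) for s in modulated))
```

Because FM changes only the frequency of the carrier and not its amplitude, the output keeps a constant envelope, which fits the description of the result as a steady tone.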

Each vertical line is one short pulse of microwave signal at a frequency to which the human brain is sensitive. Timing of each microwave pulse is controlled by each down-slope crossing of the voice wave (Sharp’s method, 1974). Then the brain converts the train of microwave pulses back to inaudible voice. There is no conscious defense possible against this form of hypnosis.

Ordinary radio and TV signals use a smooth waveform called a ‘sine’ wave. This wave signal cannot normally penetrate the voltage gradient across the nerve cell walls. Radar signals consist of very short and powerful pulses of sine wave type signals, and can penetrate the steep voltage gradient across these nerve cell walls (Allan H. Frey, Cornell University, 1962).

Differences in osmosis of ions (dissolved salt components) cause a small voltage difference across cell walls. When a small voltage appears across a very tiny distance, the change in voltage is called very ‘steep.’ It is this steep gradient that keeps normal radio signals from throwing us into convulsions.

The mind-altering mechanism is based on a subliminal carrier technology: the Silent Sound Spread Spectrum (SSSS), sometimes called “S-quad” or “Squad”. It was developed by Dr Oliver Lowery of Norcross, Georgia, and is described in US Patent #5,159,703, “Silent Subliminal Presentation System”, dated October 27, 1992. The abstract for the patent reads:

“A silent communications system in which nonaural carriers, in the very low or very high audio-frequency range or in the adjacent ultrasonic frequency spectrum are amplitude- or frequency-modulated with the desired intelligence and propagated acoustically or vibrationally, for inducement into the brain, typically through the use of loudspeakers, earphones, or piezoelectric transducers. The modulated carriers may be transmitted directly in real time or may be conveniently recorded and stored on mechanical, magnetic, or optical media for delayed or repeated transmission to the listener.”

According to literature by Silent Sounds, Inc., it is now possible, using supercomputers, to analyze human emotional EEG patterns and replicate them, then store these “emotion signature clusters” on another computer and, at will, “silently induce and change the emotional state in a human being”.

Edward Tilton, President of Silent Sounds, Inc., says this about S-quad in a letter dated December 13, 1996:

“All schematics, however, have been classified by the US Government and we are not allowed to reveal the exact details… … we make tapes and CDs for the German Government, even the former Soviet Union countries! All with the permission of the US State Department, of course… The system was used throughout Operation Desert Storm (Iraq) quite successfully.”

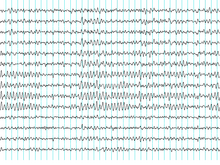

“Induced Alpha to Theta Biofeedback Cluster Movement” is an output from “the world’s most versatile and most sensitive electroencephalograph (EEG) machine”. This device has a gain capability of 200,000, as compared to most other EEG machines (with gain capability of 50,000). It is software-driven by the “fastest of computers” using a noise nulling technology similar to that used by nuclear submarines for detecting small objects underwater at extreme range.

The purpose of all this high technology is to plot and display a moving cluster of periodic brainwave signals. The illustration shows an EEG display from a single individual, taken of left and right hemispheres simultaneously. This technology is very similar to that used to generate P300 waves.

Cloning the Emotions

By using these computer-enhanced EEGs, scientists can identify and isolate the brain’s low-amplitude “emotion signature clusters,” synthesize them and store them on another computer. In other words, by studying the subtle characteristic brainwave patterns that occur when a subject experiences a particular emotion, scientists have been able to identify the concomitant brainwave pattern and can now duplicate it.

“These clusters are then placed on the Silent Sound[TM] carrier frequencies and will silently trigger the occurrence of the same basic emotion in another human being!”

Regarding system delivery and applications, there is a lot more involved here than a simple subliminal sound system. There are numerous patented technologies that can be piggybacked individually or collectively onto a carrier frequency to elicit all kinds of effects.

There appear to be two methods of delivery with the system. One is direct microwave induction into the brain of the subject, limited to short-range operations. The other, as described above, utilizes ordinary radio and television carrier frequencies.

Far from necessarily being used as a weapon against a person, the system does have limitless positive applications. However, the fact that the sounds are subliminal makes them virtually undetectable and possibly dangerous to the general public.

In more conventional use, the Silent Sounds Subliminal System might utilize voice commands, e.g., as an adjunct to security systems. Beneath the musical broadcast that you hear in stores and shopping malls may be a hidden message that exhorts against shoplifting. And while voice commands alone are powerful, when the subliminal presentation system carries cloned emotional signatures, the result is overwhelming.

Free-market uses for this technology are the common self-help tapes, positive affirmation, relaxation and meditation tapes, as well as methods to increase learning capabilities. But there is strong evidence that this technology is being developed toward global mind control. The secrecy involved in the development of the electromagnetic mind-altering technology reflects the tremendous power that is inherent in it. To put it bluntly, whoever controls this technology can control the minds of men – all men.

There is evidence that the U.S. Government has plans to extend the range of this technology to envelop all peoples, all countries. This can be accomplished, and is being accomplished, by utilizing the nearly completed HAARP project for overseas areas and the GWEN network now in place in the U.S. The U.S. Government denies all this.

Dr Michael Persinger is a Professor of Psychology and Neuroscience at Laurentian University, Ontario, Canada. His work and findings indicate that strong electromagnetic fields can and will affect a person’s brain.

“Temporal lobe stimulation can evoke the feeling of a presence, disorientation, and perceptual irregularities. It can activate images stored in the subject’s memory, including nightmares and monsters that are normally suppressed.”

As you can see, Mind Control Weapons have developed into the realms of WMD: Weapons of MASS MIND Destruction. Over the next few weeks I will be recounting my own personal experiences of this technology and the effects it had upon myself and my family.

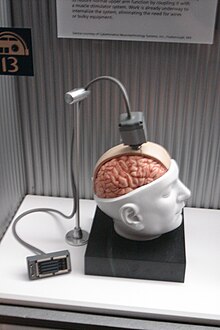

A brain–computer interface (BCI), often called a mind-machine interface (MMI), or sometimes called a direct neural interface or a brain–machine interface (BMI), is a direct communication pathway between the brain and an external device. BCIs are often directed at assisting, augmenting, or repairing human cognitive or sensory-motor functions.

Research on BCIs began in the 1970s at the University of California Los Angeles (UCLA) under a grant from the National Science Foundation, followed by a contract from DARPA.[1][2] The papers published after this research also mark the first appearance of the expression brain–computer interface in scientific literature.

The field of BCI research and development has since focused primarily on neuroprosthetics applications that aim at restoring damaged hearing, sight and movement. Thanks to the remarkable cortical plasticity of the brain, signals from implanted prostheses can, after adaptation, be handled by the brain like natural sensor or effector channels.[3] Following years of animal experimentation, the first neuroprosthetic devices implanted in humans appeared in the mid-1990s.

Hans Berger's first EEG recording device was very rudimentary. He inserted silver wires under the scalps of his patients. These were later replaced by silver foils attached to the patients' heads by rubber bandages. Berger connected these sensors to a Lippmann capillary electrometer, with disappointing results. More sophisticated measuring devices, such as the Siemens double-coil recording galvanometer, which displayed electric voltages as small as one ten thousandth of a volt, led to success.

Berger analyzed how alterations in his EEG wave diagrams related to brain diseases. EEGs opened up completely new possibilities for research into human brain activity.

The difference between BCIs and neuroprosthetics is mostly in how the terms are used: neuroprosthetics typically connect the nervous system to a device, whereas BCIs usually connect the brain (or nervous system) with a computer system. Practical neuroprosthetics can be linked to any part of the nervous system—for example, peripheral nerves—while the term "BCI" usually designates a narrower class of systems which interface with the central nervous system.

The terms are sometimes, however, used interchangeably. Neuroprosthetics and BCIs seek to achieve the same aims, such as restoring sight, hearing, movement, ability to communicate, and even cognitive function. Both use similar experimental methods and surgical techniques.

In 1969 the operant conditioning studies of Fetz and colleagues, at the Regional Primate Research Center and Department of Physiology and Biophysics, University of Washington School of Medicine in Seattle, showed for the first time that monkeys could learn to control the deflection of a biofeedback meter arm with neural activity.[7]

Similar work in the 1970s established that monkeys could quickly learn to voluntarily control the firing rates of individual and multiple neurons in the primary motor cortex if they were rewarded for generating appropriate patterns of neural activity.[8]

Studies that developed algorithms to reconstruct movements from motor cortex neurons, which control movement, date back to the 1970s. In the 1980s, Apostolos Georgopoulos at Johns Hopkins University found a mathematical relationship between the electrical responses of single motor cortex neurons in rhesus macaque monkeys and the direction in which they moved their arms (based on a cosine function). He also found that dispersed groups of neurons, in different areas of the monkey's brains, collectively controlled motor commands. But he was able to record the firings of neurons in only one area at a time, because of the technical limitations imposed by his equipment.[9]
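The cosine relationship described above can be illustrated with a small sketch. It assumes a textbook cosine tuning curve and a population-vector readout; the baseline, gain, number of neurons and preferred directions are made-up values for illustration, not figures from the original study.

```python
import math

def firing_rate(direction, preferred, baseline=20.0, gain=10.0):
    """Cosine tuning: the rate peaks when the movement matches the preferred direction."""
    return baseline + gain * math.cos(direction - preferred)

def population_vector(rates, preferred_dirs, baseline=20.0):
    """Sum each neuron's preferred-direction unit vector, weighted by its rate above baseline."""
    x = sum((r - baseline) * math.cos(p) for r, p in zip(rates, preferred_dirs))
    y = sum((r - baseline) * math.sin(p) for r, p in zip(rates, preferred_dirs))
    return math.atan2(y, x)

# Eight hypothetical neurons with evenly spaced preferred directions
preferred_dirs = [2 * math.pi * k / 8 for k in range(8)]
true_direction = math.radians(60)

rates = [firing_rate(true_direction, p) for p in preferred_dirs]
decoded = population_vector(rates, preferred_dirs)
print(round(math.degrees(decoded), 3))
```

With evenly spaced preferred directions and noise-free rates, the decoded angle matches the true movement direction. Real recordings are noisy, so many more neurons would be needed for a usable estimate.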

There has been rapid development in BCIs since the mid-1990s.[10] Several groups have been able to capture complex brain motor cortex signals by recording from neural ensembles (groups of neurons) and using these to control external devices. Notable research groups have been led by Richard Andersen, John Donoghue, Phillip Kennedy, Miguel Nicolelis and Andrew Schwartz.

In 1999, researchers led by Yang Dan at the University of California, Berkeley decoded neuronal firings to reproduce images seen by cats. The team used an array of electrodes embedded in the thalamus (which integrates all of the brain’s sensory input) of sharp-eyed cats. Researchers targeted 177 brain cells in the thalamus lateral geniculate nucleus area, which decodes signals from the retina.

The cats were shown eight short movies, and their neuron firings were recorded. Using mathematical filters, the researchers decoded the signals to generate movies of what the cats saw and were able to reconstruct recognizable scenes and moving objects.[11] Similar results in humans have since been achieved by researchers in Japan (see below).

After conducting initial studies in rats during the 1990s, Nicolelis and his colleagues developed BCIs that decoded brain activity in owl monkeys and used the devices to reproduce monkey movements in robotic arms. Monkeys have advanced reaching and grasping abilities and good hand manipulation skills, making them ideal test subjects for this kind of work.

By 2000 the group succeeded in building a BCI that reproduced owl monkey movements while the monkey operated a joystick or reached for food.[12] The BCI operated in real time and could also control a separate robot remotely over Internet protocol. But the monkeys could not see the arm moving and did not receive any feedback, a so-called open-loop BCI.

Later experiments by Nicolelis using rhesus monkeys succeeded in closing the feedback loop and reproduced monkey reaching and grasping movements in a robot arm. With their deeply cleft and furrowed brains, rhesus monkeys are considered to be better models for human neurophysiology than owl monkeys. The monkeys were trained to reach and grasp objects on a computer screen by manipulating a joystick while corresponding movements by a robot arm were hidden.[13][14] The monkeys were later shown the robot directly and learned to control it by viewing its movements. The BCI used velocity predictions to control reaching movements and simultaneously predicted handgripping force.

Donoghue's group reported training rhesus monkeys to use a BCI to track visual targets on a computer screen (closed-loop BCI) with or without assistance of a joystick.[15] Schwartz's group created a BCI for three-dimensional tracking in virtual reality and also reproduced BCI control in a robotic arm.[16] The same group also created headlines when they demonstrated that a monkey could feed itself pieces of fruit and marshmallows using a robotic arm controlled by the animal's own brain signals.[17][18][19]

Andersen's group used recordings of premovement activity from the posterior parietal cortex in their BCI, including signals created when experimental animals anticipated receiving a reward.[20]

Miguel Nicolelis and colleagues demonstrated that the activity of large neural ensembles can predict arm position. This work made possible creation of BCIs that read arm movement intentions and translate them into movements of artificial actuators. Carmena and colleagues[13] programmed the neural coding in a BCI that allowed a monkey to control reaching and grasping movements by a robotic arm. Lebedev and colleagues[14] argued that brain networks reorganize to create a new representation of the robotic appendage in addition to the representation of the animal's own limbs.

The biggest impediment to BCI technology at present is the lack of a sensor modality that provides safe, accurate and robust access to brain signals. It is conceivable or even likely, however, that such a sensor will be developed within the next twenty years. The use of such a sensor should greatly expand the range of communication functions that can be provided using a BCI.

Development and implementation of a BCI system is complex and time consuming. In response to this problem, Dr. Gerwin Schalk has been developing a general-purpose system for BCI research, called BCI2000. BCI2000 has been in development since 2000 in a project led by the Brain–Computer Interface R&D Program at the Wadsworth Center of the New York State Department of Health in Albany, New York, USA.

A new 'wireless' approach uses light-gated ion channels such as Channelrhodopsin to control the activity of genetically defined subsets of neurons in vivo. In the context of a simple learning task, illumination of transfected cells in the somatosensory cortex influenced the decision making process of freely moving mice.[22]

Invasive BCI research has targeted repairing damaged sight and providing new functionality for people with paralysis. Invasive BCIs are implanted directly into the grey matter of the brain during neurosurgery. As they rest in the grey matter, invasive devices produce the highest quality signals of BCI devices but are prone to scar-tissue build-up, causing the signal to become weaker or even lost as the body reacts to a foreign object in the brain.

In vision science, direct brain implants have been used to treat non-congenital (acquired) blindness. One of the first scientists to produce a working brain interface to restore sight was private researcher William Dobelle.

Dobelle's first prototype was implanted into "Jerry", a man blinded in adulthood, in 1978. A single-array BCI containing 68 electrodes was implanted onto Jerry’s visual cortex and succeeded in producing phosphenes, the sensation of seeing light. The system included cameras mounted on glasses to send signals to the implant. Initially, the implant allowed Jerry to see shades of grey in a limited field of vision at a low frame-rate. This also required him to be hooked up to a two-ton mainframe computer, but shrinking electronics and faster computers made his artificial eye more portable and now enable him to perform simple tasks unassisted.[23]

In 2002, Jens Naumann, also blinded in adulthood, became the first in a series of 16 paying patients to receive Dobelle’s second generation implant, marking one of the earliest commercial uses of BCIs. The second generation device used a more sophisticated implant enabling better mapping of phosphenes into coherent vision. Phosphenes are spread out across the visual field in what researchers call "the starry-night effect". Immediately after his implant, Jens was able to use his imperfectly restored vision to drive an automobile slowly around the parking area of the research institute.

Researchers at Emory University in Atlanta, led by Philip Kennedy and Roy Bakay, were first to install a brain implant in a human that produced signals of high enough quality to simulate movement. Their patient, Johnny Ray (1944–2002), developed locked-in syndrome after a brain-stem stroke in 1997. Ray’s implant was installed in 1998 and he lived long enough to start working with the implant, eventually learning to control a computer cursor; he died in 2002 of a brain aneurysm.[24]

Tetraplegic Matt Nagle became the first person to control an artificial hand using a BCI in 2005 as part of the first nine-month human trial of Cyberkinetics’s BrainGate chip-implant. Implanted in Nagle’s right precentral gyrus (area of the motor cortex for arm movement), the 96-electrode BrainGate implant allowed Nagle to control a robotic arm by thinking about moving his hand, as well as a computer cursor, lights and TV.[25] One year later, Professor Jonathan Wolpaw received the prize of the Altran Foundation for Innovation to develop a brain–computer interface with electrodes located on the surface of the skull, instead of directly in the brain.

Electrocorticography (ECoG) measures the electrical activity of the brain taken from beneath the skull in a similar way to non-invasive electroencephalography (see below), but the electrodes are embedded in a thin plastic pad that is placed above the cortex, beneath the dura mater.[26] ECoG technologies were first trialed in humans in 2004 by Eric Leuthardt and Daniel Moran from Washington University in St Louis. In a later trial, the researchers enabled a teenage boy to play Space Invaders using his ECoG implant.[27] This research indicates that control is rapid, requires minimal training, and may be an ideal tradeoff with regard to signal fidelity and level of invasiveness.

(Note: these electrodes had not been implanted in the patient with the intention of developing a BCI. The patient had been suffering from severe epilepsy and the electrodes were temporarily implanted to help his physicians localize seizure foci; the BCI researchers simply took advantage of this.)

Signals can be either subdural or epidural, but are not taken from within the brain parenchyma itself. ECoG has not been studied extensively until recently because of limited access to subjects. Currently, the only way to acquire the signal for study is through patients who require invasive monitoring for localization and resection of an epileptogenic focus.

ECoG is a very promising intermediate BCI modality because it has higher spatial resolution, better signal-to-noise ratio, wider frequency range, and fewer training requirements than scalp-recorded EEG, and at the same time has lower technical difficulty, lower clinical risk, and probably superior long-term stability than intracortical single-neuron recording. This feature profile and recent evidence of the high level of control with minimal training requirements show potential for real world application for people with motor disabilities.[28][29]

Light Reactive Imaging BCI devices are still in the realm of theory. These would involve implanting a laser inside the skull. The laser would be trained on a single neuron and the neuron's reflectance measured by a separate sensor. When the neuron fires, the laser light pattern and wavelengths it reflects would change slightly. This would allow researchers to monitor single neurons but require less contact with tissue and reduce the risk of scar-tissue build-up.

Research into synthetic telepathy using subvocalization is taking place at the University of California, Irvine under lead scientist Mike D'Zmura. The first such communication took place in the 1960s using EEG to create Morse code using brain alpha waves. Using EEG to communicate imagined speech is less accurate than the invasive method of placing an electrode between the skull and the brain.[31]

Electroencephalography (EEG) is the most studied potential non-invasive interface, mainly due to its fine temporal resolution, ease of use, portability and low set-up cost. But as well as the technology's susceptibility to noise, another substantial barrier to using EEG as a brain–computer interface is the extensive training required before users can work the technology. For example, in experiments beginning in the mid-1990s, Niels Birbaumer at the University of Tübingen in Germany trained severely paralysed people to self-regulate the slow cortical potentials in their EEG to such an extent that these signals could be used as a binary signal to control a computer cursor.[32] (Birbaumer had earlier trained epileptics to prevent impending fits by controlling this low voltage wave.) The experiment saw ten patients trained to move a computer cursor by controlling their brainwaves. The process was slow, requiring more than an hour for patients to write 100 characters with the cursor, while training often took many months.

Another research parameter is the type of oscillatory activity that is measured. Birbaumer's later research with Jonathan Wolpaw at New York State University has focused on developing technology that would allow users to choose the brain signals they found easiest to operate a BCI, including mu and beta rhythms.

A further parameter is the method of feedback used and this is shown in studies of P300 signals. Patterns of P300 waves are generated involuntarily (stimulus-feedback) when people see something they recognize and may allow BCIs to decode categories of thoughts without training patients first. By contrast, the biofeedback methods described above require learning to control brainwaves so the resulting brain activity can be detected.

Lawrence Farwell and Emanuel Donchin developed an EEG-based brain–computer interface in the 1980s.[33] Their "mental prosthesis" used the P300 brainwave response to allow subjects, including one paralyzed Locked-In syndrome patient, to communicate words, letters and simple commands to a computer and thereby to speak through a speech synthesizer driven by the computer. A number of similar devices have been developed since then. In 2000, for example, research by Jessica Bayliss at the University of Rochester showed that volunteers wearing virtual reality helmets could control elements in a virtual world using their P300 EEG readings, including turning lights on and off and bringing a mock-up car to a stop.[34]
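As a rough illustration of how a P300-based speller can pick a character, the sketch below assumes a grid of symbols whose rows and columns are flashed repeatedly, with the attended row and column producing the largest average response. The grid layout, amplitudes and function names are hypothetical and are not taken from the original system.

```python
# Hypothetical 6x6 symbol grid; the layout is an assumption for illustration
GRID = ["ABCDEF", "GHIJKL", "MNOPQR", "STUVWX", "YZ1234", "56789_"]

def mean(values):
    return sum(values) / len(values)

def pick_symbol(row_epochs, col_epochs):
    """row_epochs[r] and col_epochs[c] hold one P300-window amplitude per flash repetition."""
    row_scores = [mean(e) for e in row_epochs]
    col_scores = [mean(e) for e in col_epochs]
    # The attended symbol sits where the strongest row and column responses cross
    r = row_scores.index(max(row_scores))
    c = col_scores.index(max(col_scores))
    return GRID[r][c]

# Made-up amplitudes: row 2 and column 3 carry the large responses
row_epochs = [[0.1, -0.2, 0.0], [0.3, 0.1, -0.1], [2.1, 1.8, 2.4],
              [0.0, 0.2, 0.1], [-0.1, 0.0, 0.2], [0.1, 0.1, -0.3]]
col_epochs = [[0.2, 0.0, 0.1], [0.1, -0.1, 0.0], [0.0, 0.3, -0.2],
              [1.9, 2.2, 2.0], [0.1, 0.0, 0.1], [-0.2, 0.1, 0.0]]

symbol = pick_symbol(row_epochs, col_epochs)
print(symbol)
```

Averaging over repetitions is what makes the small P300 response stand out from background EEG, which is also why spelling with such a device is slow.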

While EEG based brain-computer interfaces have been pursued extensively by a number of research labs, recent advancements made by Bin He and his team at the University of Minnesota suggest that an EEG based brain-computer interface can accomplish tasks close to those of an invasive brain-computer interface. Using advanced functional neuroimaging including BOLD functional MRI and EEG source imaging, Bin He and co-workers identified the co-variation and co-localization of electrophysiological and hemodynamic signals induced by motor imagination.[35] Refined by a neuroimaging approach and by a training protocol, Bin He and co-workers demonstrated the ability of a non-invasive EEG based brain-computer interface to control the flight of a virtual helicopter in 3-dimensional space, based upon motor imagination.[36]

In addition to a brain-computer interface based on brain waves, as recorded from scalp EEG electrodes, Bin He and co-workers explored a virtual EEG signal-based brain-computer interface by first solving the EEG inverse problem and then using the resulting virtual EEG for brain-computer interface tasks. Well-controlled studies suggested the merits of such a source analysis based brain-computer interface.[37]

The dry electrode was tested on an electrical test bench and on human subjects in four modalities of EEG activity, namely: (1) spontaneous EEG, (2) sensory event-related potentials, (3) brain stem potentials, and (4) cognitive event-related potentials. The performance of the dry electrode compared favorably with that of the standard wet electrodes: it needed less skin preparation, required no gel, and gave a higher signal-to-noise ratio.[39]

In 1999 researchers at Case Western Reserve University, in Cleveland, Ohio, led by Hunter Peckham, used a 64-electrode EEG skullcap to return limited hand movements to quadriplegic Jim Jatich. As Jatich concentrated on simple but opposite concepts like up and down, his beta-rhythm EEG output was analysed using software to identify patterns in the noise. A basic pattern was identified and used to control a switch: above average activity was set to on, below average to off. As well as enabling Jatich to control a computer cursor, the signals were also used to drive the nerve controllers embedded in his hands, restoring some movement.[40]
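The on/off rule described above amounts to thresholding at the mean. A minimal sketch, assuming the input is a list of beta-band power readings (the values below are made up):

```python
def switch_states(band_power):
    """Return True (on) for readings above the average, False (off) otherwise."""
    average = sum(band_power) / len(band_power)
    return [p > average for p in band_power]

# Hypothetical beta-band power readings
samples = [4.0, 6.5, 3.0, 8.0, 5.0, 2.5]
states = switch_states(samples)
print(states)
```

A real system would need to estimate the average from a calibration period rather than from the same readings it is classifying.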

Experiments by Eduardo Miranda, at the University of Plymouth in the UK, have aimed to use EEG recordings of mental activity associated with music to allow the disabled to express themselves musically through an encephalophone.[42] Ramaswamy Palaniappan has pioneered the development of BCI for use in biometrics to identify/authenticate a person.[43] The method has also been suggested for use as a PIN generation device (for example in ATM and internet banking transactions).[44] The group, which is now at the University of Wolverhampton, has previously developed analogue cursor control using thoughts.[45]

Researchers at the University of Twente in the Netherlands have been conducting research on using BCIs for non-disabled individuals, proposing that BCIs could improve error handling, task performance, and user experience and that they could broaden the user spectrum.[46] They particularly focused on BCI games,[47] suggesting that BCI games could provide challenge, fantasy and sociality to game players and could, thus, improve player experience.[48]

The Emotiv company has been selling a commercial video game controller, known as The Epoc, since December 2009. The Epoc uses electromagnetic sensors.[49][50]

The first BCI session with 100% accuracy (based on 80 right hand and 80 left hand movement imaginations) was recorded in 1998 by Christoph Guger. The BCI system used 27 electrodes overlaying the sensorimotor cortex, weighted the electrodes with Common Spatial Patterns, calculated the running variance and used a linear discriminant analysis.[51]
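The last two stages of the pipeline described above, running variance followed by linear discriminant analysis, can be sketched as follows. This is a simplified illustration under stated assumptions: the Common Spatial Patterns step is omitted, only two feature channels are used so the matrix inverse can be written in closed form, and all feature values and function names are made up.

```python
def running_variance(signal, window):
    """Variance of the most recent `window` samples, one value per full window."""
    out = []
    for end in range(window, len(signal) + 1):
        chunk = signal[end - window:end]
        m = sum(chunk) / window
        out.append(sum((s - m) ** 2 for s in chunk) / window)
    return out

def fit_lda(class_a, class_b):
    """Fisher LDA for 2-D features. Returns (w, b); w.x + b > 0 predicts class A."""
    def mean2(rows):
        return [sum(r[0] for r in rows) / len(rows),
                sum(r[1] for r in rows) / len(rows)]
    ma, mb = mean2(class_a), mean2(class_b)
    # Pooled within-class scatter matrix [[sxx, sxy], [sxy, syy]]
    sxx = sxy = syy = 0.0
    for rows, m in ((class_a, ma), (class_b, mb)):
        for x, y in rows:
            sxx += (x - m[0]) ** 2
            sxy += (x - m[0]) * (y - m[1])
            syy += (y - m[1]) ** 2
    det = sxx * syy - sxy * sxy
    dx, dy = ma[0] - mb[0], ma[1] - mb[1]
    # w = S^-1 (ma - mb), using the closed-form inverse of a 2x2 matrix
    w = [(syy * dx - sxy * dy) / det, (sxx * dy - sxy * dx) / det]
    # Place the decision boundary halfway between the two class means
    b = -(w[0] * (ma[0] + mb[0]) + w[1] * (ma[1] + mb[1])) / 2
    return w, b

def classify(w, b, x):
    return "left" if w[0] * x[0] + w[1] * x[1] + b > 0 else "right"

# Made-up variance features (channel 1, channel 2) for each imagined movement
left = [[2.0, 0.5], [2.2, 0.7], [1.8, 0.4]]
right = [[0.5, 2.1], [0.6, 1.8], [0.4, 2.3]]
w, b = fit_lda(left, right)
print(classify(w, b, [1.9, 0.6]), classify(w, b, [0.5, 2.0]))
```

In a full system the two channels would be spatially filtered signals, and the running variance would be computed on those signals before being fed to the classifier.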

Research is ongoing into military use of BCIs and since the 1970s DARPA has been funding research on this topic.[1][2] The current focus of research is user-to-user communication through analysis of neural signals.[52] The project "Silent Talk" aims to detect and analyze the word-specific neural signals, using EEG, which occur before speech is vocalized, and to see if the patterns are generalizable.[53]

Magnetoencephalography (MEG) and functional magnetic resonance imaging (fMRI) have both been used successfully as non-invasive BCIs.[54] In a widely reported experiment, fMRI allowed two users being scanned to play Pong in real-time by altering their haemodynamic response or brain blood flow through biofeedback techniques.[55]

fMRI measurements of haemodynamic responses in real time have also been used to control robot arms with a seven second delay between thought and movement.[56]

In 2008 research developed in the Advanced Telecommunications Research (ATR) Computational Neuroscience Laboratories in Kyoto, Japan, allowed the scientists to reconstruct images directly from the brain and display them on a computer. The article announcing these achievements was the cover story of the journal Neuron of 10 December 2008.[57] While the early results are limited to black and white images of 10x10 squares (pixels), according to the researchers further development of the technology may make it possible to achieve color images, and even view or record dreams.[58][59]

In 2011 researchers from UC Berkeley published[60] a study reporting second-by-second reconstruction of videos watched by the study's subjects, from fMRI data. This was achieved by creating a statistical model relating visual patterns in videos shown to the subjects, to the brain activity caused by watching the videos. This model was then used to look up the 100 one-second video segments, in a database of 18 million seconds of random YouTube videos, whose visual patterns most closely matched the brain activity recorded when subjects watched a new video. These 100 one-second video extracts were then combined into a mashed-up image that resembled the video being watched.[61][62][63]
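The look-up-and-average step described above can be sketched in a few lines. The sketch assumes each library clip already has a model-predicted activity vector and a tiny "frame" of pixel values; the similarity measure, the data and the names are illustrative assumptions, not the study's actual model.

```python
def similarity(a, b):
    """Negative squared Euclidean distance: larger means more alike."""
    return -sum((x - y) ** 2 for x, y in zip(a, b))

def reconstruct(observed, library, k):
    """Average the frames of the k clips whose predicted activity best matches `observed`."""
    ranked = sorted(library,
                    key=lambda clip: similarity(observed, clip["predicted"]),
                    reverse=True)
    top = ranked[:k]
    n_pixels = len(top[0]["frame"])
    return [sum(clip["frame"][i] for clip in top) / k for i in range(n_pixels)]

# Tiny made-up library: predicted brain activity and a 3-pixel frame per clip
library = [
    {"predicted": [1.0, 0.0], "frame": [1.0, 0.0, 0.0]},
    {"predicted": [0.9, 0.1], "frame": [0.8, 0.2, 0.0]},
    {"predicted": [0.0, 1.0], "frame": [0.0, 0.0, 1.0]},
]
observed = [1.0, 0.1]  # activity recorded while watching a new clip

image = reconstruct(observed, library, k=2)
print(image)
```

Averaging the best matches is what produces the blurred, mashed-up look of the reconstructions: the output is a blend of existing clips, not a direct readout of the image.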

Because neurogaming is still in its early stages, little has been written about the new industry. The first NeuroGaming Conference will be held in San Francisco on May 1-2, 2013.[69]

Philip Kennedy founded Neural Signals in 1987 to develop BCIs that would allow paralysed patients to communicate with the outside world and control external devices. As well as an invasive BCI, the company also sells an implant to restore speech. Neural Signals' "Brain Communicator" BCI device uses glass cones containing microelectrodes coated with proteins to encourage the electrodes to bind to neurons.

Although 16 paying patients were treated using William Dobelle's vision BCI, new implants ceased within a year of Dobelle's death in 2004. A company controlled by Dobelle, Avery Biomedical Devices, and Stony Brook University are continuing development of the implant, which has not yet received Food and Drug Administration approval for human implantation in the United States.[70]

Ambient, at a TI developers conference in early 2008, demonstrated a product they have in development called The Audeo. The Audeo aims to create a human–computer interface for communication without the need of physical motor control or speech production. Using signal processing, unpronounced speech can be translated from intercepted neurological signals.[71]

Mindball is a product, developed and commercialized by the Swedish company Interactive Productline, in which players compete to control a ball's movement across a table by becoming more relaxed and focused.[72] Interactive Productline's objective is to develop and sell easily understandable EEG products that train the ability to relax and focus.[73]

An Austrian company called Guger Technologies, or g.tec,[74] has been offering brain–computer interface systems since 1999. The company provides base BCI models as development platforms for the research community to build upon, including the P300 Speller, Motor Imagery, and Steady-State Visual Evoked Potential. g.tec recently developed the g.SAHARA dry electrode system, which can provide signals comparable to gel-based systems.[75]

Spanish company Starlab entered this market in 2009 with a wireless 4-channel system called Enobio. In 2011 Enobio 8 and 20 channel (CE Medical) was released and is now commercialised by Starlab spin-off Neuroelectrics. Designed for medical and research purposes, the system provides an all-in-one solution and a platform for application development.[76]

There are three main commercial competitors in this area (launch date in brackets), which have launched consumer devices primarily for gaming and PC users:

Development of the first working neurochip was claimed by a Caltech team led by Jerome Pine and Michael Maher in 1997.[78] The Caltech chip had room for 16 neurons.

In 2003 a team led by Theodore Berger, at the University of Southern California, started work on a neurochip designed to function as an artificial or prosthetic hippocampus. The neurochip was designed to function in rat brains and was intended as a prototype for the eventual development of higher-brain prosthesis. The hippocampus was chosen because it is thought to be the most ordered and structured part of the brain and is the most studied area. Its function is to encode experiences for storage as long-term memories elsewhere in the brain.[79]

Thomas DeMarse at the University of Florida used a culture of 25,000 neurons taken from a rat's brain to fly an F-22 fighter jet aircraft simulator.[80] After collection, the cortical neurons were cultured in a petri dish and rapidly began to reconnect themselves to form a living neural network. The cells were arranged over a grid of 60 electrodes and used to control the pitch and yaw functions of the simulator. The study's focus was on understanding how the human brain performs and learns computational tasks at a cellular level.

Researchers are well aware that sound ethical guidelines, appropriately moderated enthusiasm in media coverage and education about BCI systems will be of utmost importance for the societal acceptance of this technology. More effort has therefore recently been made inside the BCI community to create consensus on ethical guidelines for BCI research, development and dissemination.[84]

Artificial/Synthetic Telepathy And Mind Control

By Lily Morgan, March 31, 2012

Special thanks to Lily Morgan for letting me re-post this here. Technologies that violate the sacred space of an individual’s mind are more prolific than people realize. Becoming familiar with these technologies is the first step to recognizing their pattern of influence—not just as they may apply to yourself or friends and family, but also in the wider net of Perception Management throughout the alternative and new-age community as well. Uses and abuses of this technology are no longer reserved for unaware military and/or black op(s) employees, or MILAB abductees. This form of victimization is heinous, cruel, and wrong at every level of human decency. The original article can be found at the following link: http://emvsinfo.blogspot.de/2012/03/artificialsynthetic-telepathy-and-mind.html

The full article is below. –Crystal Clark, November 24th, 2012

In order to have an understanding of my personal observation of my own experience with Mind Control and Synthetic Telepathy, it is vital that the reader familiarizes themselves with some background information and technical details on the development of Mind Control and Electromagnetic Weapons. I ask this because these technologies can be, and are being, used against TIs (Targeted Individuals) and whole populations on a global scale; if not currently, then plans for the future are being prepared, tested and perfected. As such, the first part of this article is devoted to providing quotes from other people’s research and their personal experiences. Had I not already spent some time making myself aware of these subjects, when I became a TI myself, I would not have had a knowledge base to eventually build a picture of what I was consciously aware of at the time, as it was happening to me. The consequences of the application of these technologies in the wrong hands are horrifying and inhumane.

Artificial

Made or produced by human beings rather than occurring naturally, typically as a copy of something natural.

Telepathy

“Telepathy” is derived from the Greek terms tele (“distant”) and pathe (“occurrence” or “feeling”). The term was coined in 1882 by the British psychical researcher Frederic W. H. Myers, a founder of the Society for Psychical Research (SPR).

Mind Control

Mind control (also known as brainwashing, coercive persuasion, mind abuse, thought control, or thought reform) refers to a process in which a group or individual “systematically uses unethically manipulative methods to persuade others to conform to the wishes of the manipulator(s), often to the detriment of the person being manipulated.”

Mind Rape

When mind reading and mind control are used against a person, it is sometimes referred to as mind rape. The reason is that, in general, mind reading is not used to observe but instead to control a person in illegal ways or to inflict maximum damage (including death) on a person. Mind rape occurs whenever one’s brain feels as though it has been assaulted viciously by some event or thing in reality, and when someone can convince and manipulate someone’s thoughts and therefore their actions.

Love Bombing

“Mind-control techniques such as love-bombing are designed to bypass a person’s intelligence and especially his critical-thinking skills. When a person suddenly receives an overwhelming amount of love and acceptance, it is extremely difficult for them to stand back and assess the reasons for this or question something they desperately don’t want to have disappear.” Love Bombing is a technique widely used to initially entice, and then to later control and manipulate.

MIND CONTROL WEAPON

The term “Mind control” basically means covert attempts to influence the thoughts and behavior of human beings against their will (or without their knowledge), particularly when surveillance of an individual is used as an integral part of such influencing. The term “Psychotronic Torture” comes from psycho (as in psychological) and electronic. This is actually a very sophisticated form of remote technological torture that slowly invalidates and incapacitates a person. These invisible and non-traceable technological assaults on human beings are done in order to destroy someone psychologically and physiologically. According to scientific sources, the human body, much like a computer, contains myriad data processors. They include, but are not limited to, the chemical-electrical activity of the brain, heart, and peripheral nervous system, the signals sent from the cortex region of the brain to other parts of our body, the tiny hair cells in the inner ear that process auditory signals, and the light-sensitive retina and cornea of the eye that process visual activity. We are on the threshold of an era in which these data processors of the human body may be manipulated or debilitated. http://www.cyberbrain.se/?page_id=112

Mind Control and Electromagnetic Weapons

Many thanks for the in-depth and invaluable information kindly quoted from these sites: http://www.stopeg.com/mindrape.html http://www.nwbotanicals.org/oak/newphysics/synthtele/synthtele.html I strongly recommend further reading, as I have only been able to touch briefly upon some of the information available on the net with regard to Mind Control.

General introduction to mind reading, mind control, mind rape

Mind control is a controversial subject, most importantly because more disinformation has been released about mind control than about any other subject. Mind control is very real, however: it was performed by our national secret services yesterday and is performed by our national secret services today!

Mind control is about controlling the mind and can take many forms. You might be controlled by subjective propaganda in your favorite newspaper, or on your favorite television channel. Or you may be brainwashed by certain drugs, or by voices beamed into your head because some people do not like you. A lot has been written on this subject. I quote parts of some books, reports and documents below; for more information, just search the internet.

From THE RAPE OF THE MIND by Joost A. M. Meerloo:

The rape of the mind and stealthy mental coercion are among the oldest crimes of mankind. They probably began back in prehistoric days when man first discovered that he could exploit human qualities of empathy and understanding in order to exert power over his fellow men. The word “rape” is derived from the Latin word _rapere_, to snatch, but also is related to the words to rave and raven. It means to overwhelm and to enrapture, to invade, to usurp, to pillage and to steal. The modern words “brainwashing,” “thought control,” and “menticide” serve to provide a clearer conception of the actual methods by which man’s integrity can be violated. When a concept is given its right name, it can be more easily recognized and it is with this recognition that the opportunity for systematic correction begins.

From Terms Other Than Mind Control:

One problem with the term “mind control” is the “kook” association. This association/stereotype is reinforced in some of the popular culture — as well as by certain victims (or provocateurs) who sound “crazy.” [There are cointelpro-style provocateurs who want to keep the real victims discredited, if possible, because they work as a damage control unit for the victimizers.] Many other people encountering the term “mind control” are just citizens who are purposely kept ignorant of the known and documented history of mind control — as well as the state of the technology right now.

From Hearing Voices, from the blog Artificial Telepathy:

The problem is that artificial telepathy provides the perfect weapon for mental torture and information theft. It provides an extremely powerful means for exploiting, harassing, controlling, and raping the mind of any person on earth. It opens the window to quasi-demonic possession of another person’s soul.

When used as a “nonlethal” weapons system it becomes an ideal means for neutralizing or discrediting a political opponent. Peace protesters, inconvenient journalists and the leaders of vocal opposition groups can be stunned into silence with this weapon. Artificial telepathy also offers an ideal means for complete invasion of privacy. If all thoughts can be read, then Passwords, PIN numbers, and personal secrets simply cannot be protected. One cannot be alone in the bathroom or shower. Embarrassing private moments cannot be hidden: they are subject to all manner of hurtful comments and remarks. Evidence can be collected for blackmail with tremendous ease: all the wrongs or moral lapses of one’s past are up for review.

From Operation Mind Control (excerpts from) by Walter H. Bowart:

The CIA succeeded in developing a whole range of psycho-weapons to expand its already ominous psychological warfare arsenal. With these capabilities, it was now possible to wage a new kind of war—a war which would take place invisibly, upon the battlefield of the human mind. … [p. 19]

Mind control is the most terrible imaginable crime because it is committed not against the body, but against the mind and the soul. Dr. Joost A. M. Meerloo expresses the attitude of the majority of psychologists in calling it ‘mind rape,’ and warns that it poses a great ‘danger of destruction of the spirit’ which can be ‘compared to the threat of total physical destruction . . .’ … [p. 23]

This technology has been around for many years in the U.S. A lot of people have been used as guinea pigs for testing. It is a satellite-delivered EEG. Many people have lost everything as a result: family, employment, etc. The first human EEG was recorded in 1924.

BRAIN WAVE MONITORS / ANALYZERS (MIND THOUGHT DECIPHERING)

Lawrence Pinneo, a neurophysiologist and electronic engineer working for Stanford Research Institute (a military contractor) is the first “known” pioneer in this field. In 1974, he developed a computer system which correlated brain waves on an electroencephalograph with specific commands.
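To make the idea of correlating brain waves with specific commands more concrete, here is a minimal Python sketch of template matching by correlation. It is an assumption for illustration only, not a description of Pinneo's system: the command names, the template values, and the functions `pearson` and `classify` are all hypothetical.

```python
import math


def pearson(a, b):
    """Pearson correlation between two equal-length lists of samples."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0  # a flat signal carries no shape to compare
    return cov / math.sqrt(var_a * var_b)


def classify(epoch, templates):
    """Return the command whose stored template best matches the epoch."""
    return max(templates, key=lambda cmd: pearson(epoch, templates[cmd]))


# Hypothetical averaged EEG templates, one per command (made-up values).
TEMPLATES = {
    "up": [0, 1, 0, -1, 0, 1, 0, -1],
    "down": [1, 0, -1, 0, 1, 0, -1, 0],
}

# A new recording with the same shape as "up", but scaled and offset.
new_epoch = [2 * x + 3 for x in TEMPLATES["up"]]
print(classify(new_epoch, TEMPLATES))
```

Because correlation ignores overall amplitude and offset, the new recording is matched on the shape of the waveform rather than on its absolute voltage.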

In the early 1990s, Dr. Edward Taub reported that words could be communicated onto a screen using the thought-activated movements of the computer cursor.

How is it done?

The magnetic field around the head (the brain waves of an individual) can be monitored by satellite. The transmitter is therefore the brain itself, just as body heat is used for “Iris” satellite tracking (infrared), or as mobile phones or bugs can be tracked as “transmitters.” In the case of brain wave monitoring, the results are then fed back to the relevant computers. Monitors then use the information to conduct “conversation,” where audible Neurophone input is “applied” to the target / victim.

OTHER KNOWN TECHNOLOGIES CURRENTLY UNDER SECRECY ORDERS

BRAINWAVE SCANNERS / PROGRAMS: First program developed in 1994 by Dr. Donald York and Dr. Thomas Jensen. In 1994, the brain wave patterns of 40 subjects were officially correlated with both spoken words and silent thought. This was achieved by a neurophysiologist, Dr. Donald York, and a speech pathologist, Dr. Thomas Jensen, from the University of Missouri. They clearly identified 27 words / syllables in specific brain wave patterns and produced a computer program with a brain wave vocabulary.

Using lasers / satellites and high-powered computers, the agencies have now gained the ability to decipher human thoughts instantaneously, from a considerable distance.

DESCRIPTION: A personal scanning and tracking system involving the monitoring of an individual's EMF via remote means, e.g. satellite. The results are fed to thought-activated computers that possess a complete brainwave vocabulary.

PURPOSE: Practically, communication with stroke victims and brain-activated control of modern jets are two applications. However, more often, it is used to mentally rape a civilian target, their thoughts being referenced immediately and/or recorded for future use.

Pulsed Microwave Technology

Pulsed microwave voice-to-skull (or other-sound-to-skull) transmission was discovered during World War II by radar technicians who found they could hear the buzz of the train of pulses being transmitted by radar equipment they were working on. This phenomenon has been studied extensively by Dr. Allan Frey (Willow Grove, 1965), whose work has been published in a number of reference books.